From boiling lead and black art: An essay on the history of mathematical typography

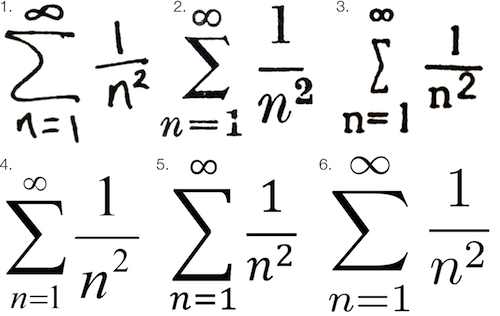

Math fonts from six different type systems, courtesy Chalkdust

I’ve always felt like constructing printed math was much more of an art form than regular typesetting. Someone typesetting mathematics is less a “typist” and more an artist attempting to render abstract data on a two-dimensional surface. Mathematical symbols are themselves a language, but they are fundamentally a visual representation of human-conceived knowledge—knowledge that would be too inefficient to convey through verbal explanations. This brings the typesetting of mathematics closer to a form of data visualization than regular printed text.

No matter how hard it’s ever been to create printed text, creating printed math has always been even harder. In pre-digital times, equation-laden texts were known as “penalty copy” because of the significant additional time and expense it took to set math notation for printing presses.

Even though modern word processors like Microsoft Word include equation editors, those editors tend to be difficult to use and often produce unpleasing results. While LaTeX and similar systems produce the highest quality digital math type, they also have a much steeper learning curve than general word processing.

But these modern quibbles are much more the fault of hedonic adaptation than of any of the tools available to us today. We have it vastly easier than any previous stage of civilization, and I think it’s critically important for those of us who write math to have at least a basic awareness of the history of mathematical typesetting.

For me, knowing this history has had several practical benefits. It’s made me more grateful for the writing tools I have today—tools that I can use to simplify and improve the presentation of quantitative concepts to other actuaries. It’s also motivated me to continue to strive for elegance in the presentation of math—something I feel like my profession has largely neglected in the Microsoft Office era of the last twenty years.

Most importantly, it’s reminded me just how much of an art the presentation of all language has always been. Because pre-Internet printing required so many steps, so many different people, so much physical craftsmanship, and so much waiting, there were more artistic layers between the author’s original thoughts and the final arrangement of letters and figures on pages. More thinking occurred throughout the entire process.

To fully appreciate mathematical typography, we have to first appreciate the general history of typography, which is also a history of human civilization. No other art form has impacted our lives more than type.

The first two Internets

While the full history of printing dates back many more centuries, few would disagree that Johannes Gutenberg’s 15th-century printing press was the big bang moment for literacy. It was just as much of an Internet-like moment as the invention of the telegraph or the Internet itself.

Before Gutenberg, reading was the realm of elites and scholars. After Gutenberg, book production exploded, and reading became exponentially more practical to the masses. Literacy rates soared. Reformations happened.

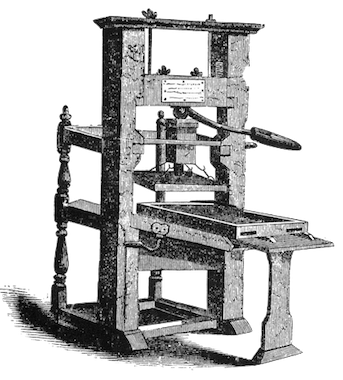

The Gutenberg Printing Press

I would argue that the invention of the printing press was on par with the evolutionary “invention” of human language itself. In The Origins of Political Order, Francis Fukuyama explains that spoken language catalyzed the separation of humans from other primates:

The development of language not only permits the short-term coordination of action but also opens up the possibility of abstraction and theory, critical cognitive faculties that are unique to human beings. Words can refer to concrete objects as well as to abstract classes of objects (dogs, trees) and to abstractions that refer to invisible forces (Zeus, gravity).

Language also permits practical survival advantages for families and social groups:

By not stepping on the snake or eating the root that killed your cousin last week, you avoid being subject to the same fate, and you can quickly communicate that rule to your offspring.

Oral communication became not only a survival skill, but a tool of incredible influence. Rhetoric and the art of persuasion were highly valued in Greek and Roman societies.

If spoken language was the first human “Internet,” mass printing was the next key milestone in the democratization of human knowledge. Mass production of printed material amplified the human voice by incalculable orders of magnitude beyond oral communication.

Of boiling lead and black art

Like all inventors, Johannes Gutenberg didn’t really make anything new so much as he combined existing materials and technologies in new ways. Gutenberg didn’t invent printing. He didn’t invent the press. He didn’t even invent movable type, which typically involves arranging (typesetting) casts of individual letters that can be brushed or dipped in ink and pressed to a page.

Metal movable type arranged by hand

Gutenberg’s key innovation was really in the typecasting process. Before Gutenberg’s time, creating letters out of metal, wood, and even ceramic was extremely time consuming and difficult to do in large quantities. Gutenberg revolutionized hot metal typesetting by coming up with an alloy mostly made of lead that could be melted and poured into a letter mold called a matrix. He also had to invent an ink that would stick to lead.

His lead alloy and matrix concepts are really the reasons the name Gutenberg became synonymous with printing. In fact, the lead mixture he devised was so effective, it continued to be used well into the 20th century, and most typecasting devices created after his time continued using a similar matrix case to mold type.

From a workflow perspective, Gutenberg’s innovation was to separate typecasting from typesetting. With more pieces of type available, simply adding more people to the process allowed for more typesetting. With more typeset pages available, printing presses could generate more pages per hour. And more pages, of course, meant more books.

But let’s not kid ourselves. Even post-Gutenberg, typesetting a single book was still an extremely tedious process. Gutenberg’s first masterpiece, the Gutenberg Bible (c. 1450s), was—and still is—considered a remarkable piece of art. Its type comprised nearly 300 distinct characters, because every uppercase and lowercase form of every letter and every symbol required its own piece of lead. Not only did each character have to be set individually by hand, but justification also required adjusting the word spacing manually, line by line.

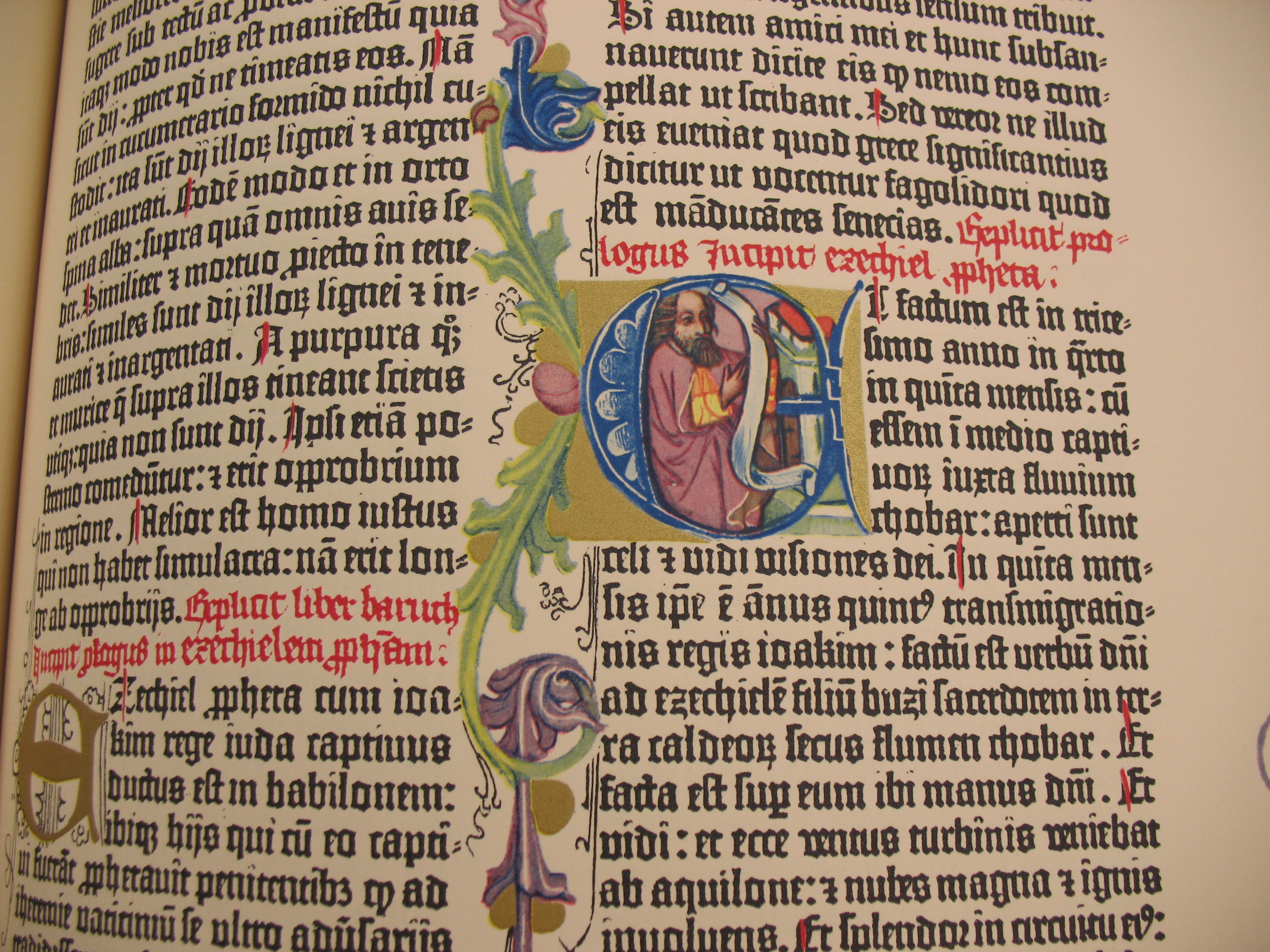

The Gutenberg Bible

Even though Gutenberg’s innovations allowed books to be printed faster than ever before, it was an excruciating process by today’s one-click standard. But it was within those moments spent arranging characters and lines that the so-called “black art” of book printing flourished. Typesetting even a basic text was an intimate, human process.

A better way to cast hot lead

The art of hand-setting type would be passed down from generation to generation for over 400 years until the Industrial Revolution began replacing human hands with machines in all aspects of life. The most famous of the late 19th century technologies to refine typesetting were Monotype and Linotype, both invented in America.

The Monotype System was invented by American-born Tolbert Lanston, and Linotype was invented by German immigrant Ottmar Mergenthaler. Both men improved on the system Gutenberg devised centuries earlier, but each added their own take on the art of shaping hot lead into type.

Because Linotype machines could produce entire fused lines of justified lead type at a time, they became extremely popular for most books, newspapers, and magazines. Just imagine the look on people’s faces when they were told they could stack entire lines of metal type rather than having to arrange each letter individually first!

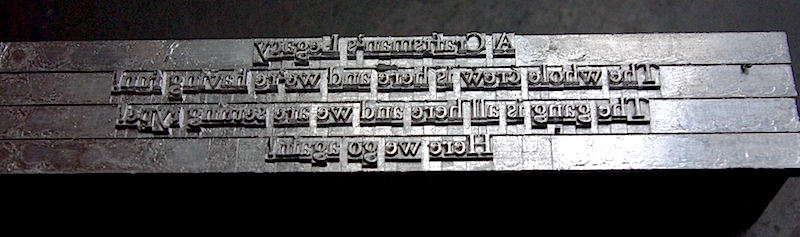

Four lines of Linotype, courtesy Deep Wood Press

The Monotype System produced individual pieces of type. It could not produce the same lines per hour as Linotype, but it maintained the art and flexibility of setting individual pieces of type. Monotype also took a more mathematical approach to typesetting:

In many ways the key innovation in the Monotype System was not the mechanical device, ingenious as it was. To allow the casting of fully justified lines of type, Tolbert Lanston chose not to follow the path of Ottmar Merganthaler, who used tapered spacebands to create word spacing. He instead devised a unit system that assigned each character a value, from five to eighteen, that corresponded to its width. A lower case “i”, or a period would be five units, an uppercase “W” would be eighteen. This allowed the development of the calculating mechanism in the keyboard, which is central to the sophistication of Monotype set matter. (Letterpress Commons)

And so it was fitting that Monotype, while slower than Linotype, offered more sophistication and ended up a favorite for mathematical texts and publications containing non-standard characters and symbols.
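To make the unit system concrete, here is a simplified model of the justification arithmetic. It assumes the keyboard simply divided the leftover width of a line evenly among its word spaces; the real machine translated its result into settings for the caster, so treat this as an illustration of the idea rather than Lanston’s exact mechanism. Here $M$ is the line measure in units, $w_i$ is the unit width of each character on the line, and $n$ is the number of word spaces. The numbers (a 400-unit measure, 352 units of characters, 8 spaces) are hypothetical, chosen for easy division:

\[
s = \frac{M - \sum_{i} w_i}{n} = \frac{400 - 352}{8} = 6 \text{ units per word space}
\]

Because every character already carried a known unit value, the keyboard could add up the line as it was typed and compute $s$ without anyone measuring metal.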

The Monotype System is an exquisite piece of engineering, and in many ways represents a perfection of Gutenberg’s original workflow using Industrial Age technology. It’s also a fantastic example of early “programming” since it made use of hole-punched paper tape to instruct the operations of a machine—an innovation that many people associate with the rise of computing in the mid-20th century, but was in use as early as 1725.

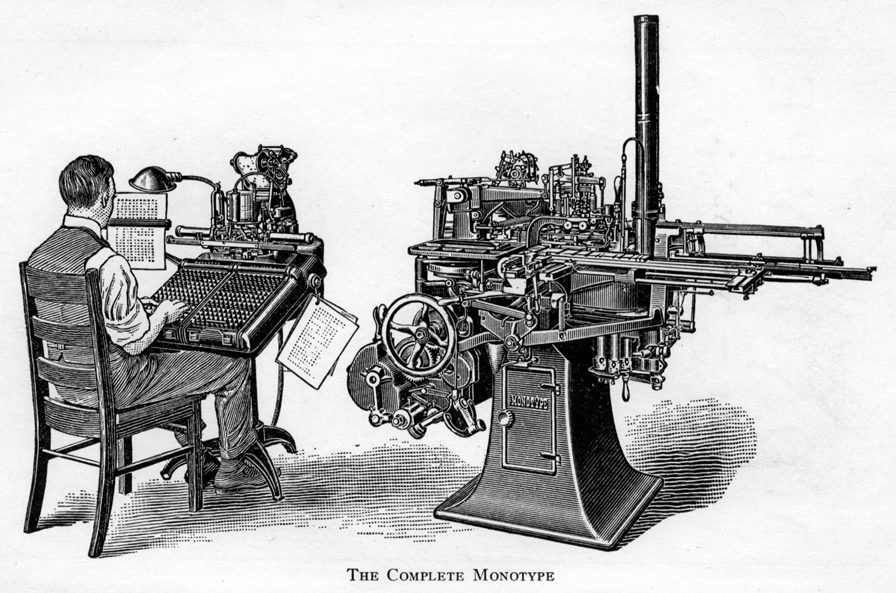

Like Gutenberg, Lanston sought to refine the workflow of typesetting by dividing it into specialized sub-steps. The Monotype System consisted of two machines: a giant keyboard and a type caster.

The keyboard had distinct keys for different cases of letters, numbers, and common symbols. The keyboard operator’s job was essentially to type character-by-character and make decisions about where to end lines. Once a line was ended, the machine would calculate the word spacing required to justify the line and punch holes into the paper tape. The caster was designed to read the hole patterns to determine how to actually cast the lines.

Therefore, a print shop could accelerate the “input” phase of typesetting by simply adding more keyboards (and people) to the process. This was a significant improvement over hand setting because a keyboard operator could generate more tape per hour than a human compositor could arrange type by hand.

The caster machine was also very efficient. As it read the tape line by line, it would inject hot, liquid lead into each type matrix, then output water-cooled type into a galley, where it came out pre-assembled into justified lines.

At this stage, the Monotype System offered a major advantage over Linotype. If a compositor, or anyone proofing the galley, found an error, the type could be fixed by hand with relative ease (especially if only a single character needed correcting). With Linotype, even a one-character error meant recasting the entire line.

It’s also easy to see why Monotype was superior to Linotype for technical writing, including mathematics: because every character was a separate piece of type, unusual symbols could be inserted and adjusted by hand. Still, even though the Monotype keyboard had tons of keys and could be modified for special purposes, it wasn’t designed to generate mathematical notation.

As I said earlier, no matter how hard it’s ever been to create text, creating math has always been even harder. Daniel Rhatigan:

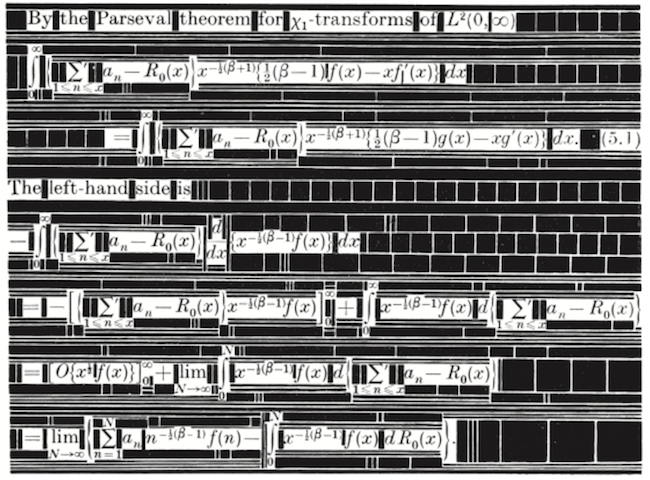

Despite the efficiency of the standard Monotype system, mechanical composition could only accommodate the most basic mathematical notation. Simple single-line expressions might be set without manual intervention, but most maths call for a mix of roman and italic characters, numerals, Greek symbols, superior and inferior characters, and many other symbols. To ease the process, printers and Monotype itself often urged authors to use alternate forms of notation that could be set more easily, but the clarity of the subject matter often depended on notation that was more difficult to set.

Even if there were room in the matrix case for all the symbols needed at one time, the frequent use of oversize characters, strip rules, and stacked characters and symbols require type set on alternate body sizes and fitted together like a puzzle. This wide variety of type styles and sizes made if [sic] costly to set text with even moderately complex mathematics, since so much time and effort went into composing the material by hand at the make-up stage.

The complex arrangement of characters and spaces required to compose mathematics with metal type, courtesy The Printing of Mathematics (1954)

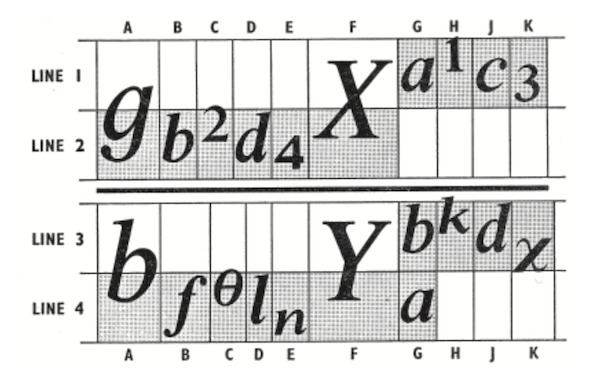

While the Monotype System would never fully displace the hand composition of math, UK-based Monotype Corporation made great strides toward this end in the 1950s with a new 4-line system for setting equations. The 4-line system essentially divided the standard equation line into four regions: regions one and two were in the upper half of the line, while regions three and four were in the lower half. It also allowed for a thin, two-point-high strip between the second and third regions. This middle strip was exactly the height of a standard equals sign (=) and was a key feature distinguishing Monotype’s 4-line system from the competing “Patton method” for 4-line math equations developed in the U.S.

The 4-line system, via Daniel Rhatigan in “The Monotype 4-Line System for Setting Mathematics”

While Monotype’s 4-line system would standardize mathematical typography more than ever before, allowing for many math symbols to be set using a modified Monotype keyboard, it would prove to be the “last hoorah” for Monotype’s role in mathematical typography—and more generally, the era of hot metal type. Roughly a decade after the 4-line system was put into production, type would go cold forever.

The typewriter compromise

The 20th century, particularly post-World War II, saw an explosion in scientific literature, not just in academia but in the public and private sectors as well. Telecommunications booms and space races don’t happen without a lot of math sharing.

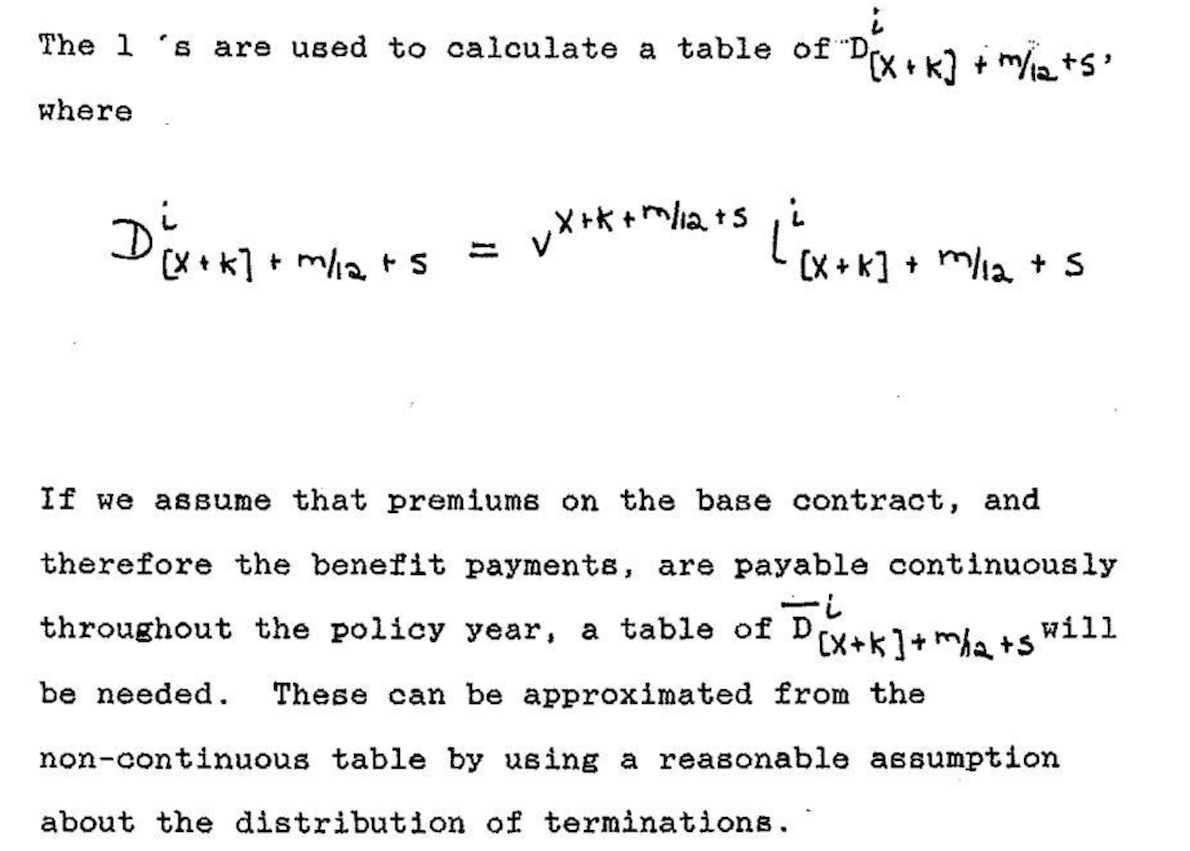

Monotype was only a solution for publications worth the cost of sending to a printing press. Many technical papers were “printed” using a typewriter. Equations could either be written in by hand or composed on something like an IBM Selectric typewriter, which became very popular in the 1960s. Typewriters were office mainstays well into the late 20th century.

An actuarial paper composed by typewriter with handwritten math (1989)

Larger departments at businesses and universities not only had legions of secretarial workers capable of typing papers, but many had technical typists as well. Anecdotes like this one from a MathOverflow commenter, Peter May, highlight the daily struggles that took place:

At Chicago in the 1960’s and 1970’s we had a technical typist who got to the point that he, knowing no mathematics, could and did catch mathematical mistakes just from the look of things. He also considered himself an artist, and it was a real battle to get things the way you and not he wanted them.

The Selectric’s key feature was a golf ball-sized typeball that could be interchanged. One of the typeballs IBM made contained math symbols, so a typist could simply swap out typeballs as needed to produce a paper containing math notation. However, the printed results were arguably worse aesthetically than handwritten math and not even comparable to Monotype.

An equation composed on an IBM Selectric typewriter, courtesy Nick Higham

Molding at the speed of light

As the second half of the 20th century progressed, technological progress would make it easier and easier to indulge those who preferred speed to aesthetics. In the 1960s, phototypesetting—which was actually invented right after World War II but had to “wait” on several computer-era innovations to fully come of age—rapidly replaced hot lead and metal matrices with light and film negatives.

Every aspect of phototypesetting was dramatically faster than hot metal typesetting. As phototypesetting matured, text could be entered on a screen rather than through the traditional keyboarding process required for Monotype and Linotype. This made it much easier to catch errors during keyboarding.

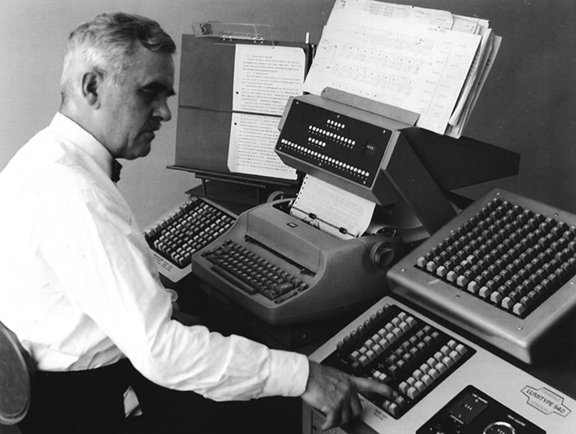

A man operating a Lumitype 450, a popular phototypesetting machine in the 1960s

Phototypesetters could generate hundreds of characters per second by rapidly flashing light through the film negative matrix. And instead of arranging lead galleys of type, compositors began arranging what were essentially photographs of text.

Phototypesetting also offered more flexibility. With a Monotype or Linotype machine, font sizes were constrained by the physical size of the matrix. Such physical constraints don’t apply to light, which could easily be magnified in a phototypesetter to create larger versions of characters.

Even though Monotype would linger into the 1980s in extremely limited use, it was essentially extinct by the mid-1970s. The allure of phototypesetting’s speed and low cost was impossible for print companies to resist.

Phototypesetting was indeed the new king of typography—but it would prove to be a mere figurehead appointed by the burgeoning computer age. As we all know now, anything computers can make viable, they can also replace. Clark Coffee:

Without a computer to drive them, phototypesetters are just like the old Linotype machines except that they produce paper instead of lead. But, with a computer, all of the old Typesetters’ decisions can be programmed. We can kern characters with abandon, dictionaries and programs can make nearly all hyphenations correctly, lines and columns can be justified, and special effects like dropped capitals become routine.

In the late 1970s, computers had become advanced enough to do such things, but of course computers, themselves, don’t want to make art. Computers need instructions from artists. Fortunately for all of us, there was such an artist with the programming chops and passion to upload the art of typesetting into the digital age.

A new matrix filled with ones and zeros

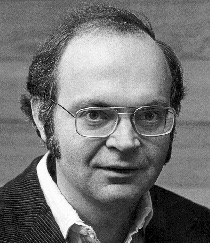

While many probably looked at photocomposed typography with indifference, one man did not. It just so happened that there was a brilliant mathematician and computer scientist who cared a lot about how printed math looked.

Donald Knuth, a professor of computer science at Stanford University, was writing a projected seven-volume survey entitled The Art of Computer Programming. Volume 3 was published in 1973, composed with Monotype. By then, computer science had advanced to the point where a revised edition of volume 2 was in order but Monotype composition was no longer possible. The galleys returned to Knuth by his publisher were photocomposed. Knuth was distressed: the results looked so awful that it discouraged him from wanting to write any more. But an opportunity presented itself in the form of the emerging digital output devices—images of letters could be constructed of zeros and ones. This was something that he, as a computer scientist, understood. Thus began the development of TeX. (Barbara Beeton and Richard Palais)

Donald Knuth (1970s)

By 1978, Knuth was ready to announce TeX (“tek”1) to the world at the annual meeting of the American Mathematical Society (AMS). In his lecture, subsequently published by the American Mathematical Society in March 1979, Knuth proclaimed that:

Mathematics books and journals do not look as beautiful as they used to. It is not that their mathematical content is unsatisfactory, rather that the old and well-developed traditions of typesetting have become too expensive. Fortunately, it now appears that mathematics itself can be used to solve this problem. (AMS)

The gravity of this assertion is difficult to appreciate today. It’s not so much a testament to Knuth’s brilliance as a mathematician and computer scientist—there were certainly others in the 1970s with comparable math and computer skills.2 What makes Knuth’s role in typographical history so special was just how much he cared about the appearance of typography in the 1970s—and the fact that he used his technical abilities to emulate the art he so appreciated from the Monotype era.

This was not a trivial math problem:

The [hot lead era] Typesetter was solely responsible for the appearance of every page. The wonderful vagaries of hyphenation, particularly in the English language, were entirely in the Typesetter’s control (for example, the word “present” as a noun hyphenates differently than the same word as a verb). Every special feature: dropped capitals, hyphenation, accented characters, mathematical formulas and equations, rules, tables, indents, footnotes, running heads, ligatures, etc. depended on the skill and esthetic judgment of the Typesetter. (Clark Coffee)

Knuth acknowledges that he was not the first person to engineer letters, numbers, and symbols using mathematical techniques. Others had attempted this as early as the 15th century, but they were constrained by a much simpler mathematical toolbox (mainly lines and circles) that simply could not orchestrate the myriad nuances of fine typography.

By the 1970s, however, there were three key innovations available for Knuth to harness. First, math had become far more sophisticated: cubic splines made it possible to define precise formulas for any character shape. Second, computers made it possible to program Knuth’s formulas for consistent repetition. Computers also made it possible to loop through lines of text, making decisions about word spacing for line justification—even retrospectively hyphenating words to achieve optimal word spacing within a paragraph. Third, digital printing had become viable, and despite Knuth’s highly discerning tastes, he was apparently satisfied with its output.

In Knuth’s words:

… I was quite skeptical about digital typography, until I saw an actual sample of what was done on a high quality machine and held it under a magnifying glass: It was impossible to tell that the letters were generated with a discrete raster! The reason for this is not that our eyes can’t distinguish more than 1000 points per inch; in appropriate circumstances they can. The reason is that particles of ink can’t distinguish such fine details—you can’t print the edge of an ink line that zigzags 1000 times on the diagonal of a square inch, the ink will round off the edges. In fact the critical number seems to be more like 500 than 1000. Thus the physical properties of ink cause it to appear as if there were no raster at all.

Knuth was certain that it was time to help typography leap over phototypesetting—from matrices of hot lead to pages of pixels.

While developing TeX and Metafont, its companion system for designing fonts, I’m sure Knuth had several “this has to be the future” moments—probably not unlike Steve Jobs standing over the first Apple I prototype in a California garage only a year or two earlier. Indeed, just like other more celebrated Jobsian innovators of the late 20th century, Knuth’s creative energy was driven by the future he saw for his innovation:

Within another ten years or so, I expect that the typical office typewriter will be replaced by a television screen attached to a keyboard and to a small computer. It will be easy to make changes to a manuscript, to replace all occurrences of one phrase by another and so on, and to transmit the manuscript either to the television screen, or to a printing device, or to another computer. Such systems are already in use by most newspapers, and new experimental systems for business offices actually will display the text in a variety of fonts. It won’t be long before these machines change the traditional methods of manuscript preparation in universities and technical laboratories.

Today, we take it for granted that computers can instantly render pretty much anything we can dream up in our minds, but this was closer to science fiction in the late 1970s. While Knuth’s chief goal for TeX was to use mathematics to automate the setting of characters in the output, he also wanted the input to be as pleasing and logical as possible to the human eye.3

For example, the following TeX syntax:

$y = \sqrt{x} + {x - 1 \over 2}$

will render:

\[y = \sqrt{x} + {x - 1 \over 2}\]

TeX was a remarkable invention, but its original form could only be used in a handful of locations—a few mainframe computers here and there. What really allowed TeX to succeed was its portability, made possible by TeX82, a second version of TeX created for multiple platforms in 1982 with the help of Frank Liang. With TeX82, Knuth also implemented a device independent file format (DVI) for TeX output. With the right DVI driver, the binary instructions in a DVI file could be translated into graphical (print) output for virtually any printer.

Knuth would only make one more major update to TeX in 1989: TeX 3.0 was expanded to accept 256 input characters instead of the original 128. This change came at the urging of TeX’s rapidly growing European user base who wanted the ability to enter accented characters and ensure proper hyphenation in non-English texts.

Except for minor bug fixes, Knuth was adamant that TeX should not be updated again beyond version 3:

I have put these systems into the public domain so that people everywhere can use the ideas freely if they wish. I have also spent thousands of hours trying to ensure that the systems produce essentially identical results on all computers. I strongly believe that an unchanging system has great value, even though it is axiomatic that any complex system can be improved. Therefore I believe that it is unwise to make further “improvements” to the systems called TeX and METAFONT. Let us regard these systems as fixed points, which should give the same results 100 years from now that they produce today.

This level of restraint was as poetic as Knuth’s work to save the centuries-old art of mathematical typography from the rapidly-changing typographical industry. Now that he had solved the mathematics of typography, he saw no reason to disrupt the process solely for the sake of disruption.

Some thirty years after TeX 3.0 was released, its advanced line justification algorithm still runs circles around other desktop publishing tools. There is no better example than Roel Zinkstok’s comparison of the first paragraph of Moby Dick set using Microsoft Word, Adobe InDesign, and pdfLaTeX (LaTeX running on the pdfTeX engine, which outputs PDF directly).
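Part of the reason is that TeX turns “good spacing” into a number it can optimize. As a rough sketch, simplified from the description in The TeXbook, TeX rates each candidate line by its badness $b$, which grows with the cube of how far the line’s spaces must stretch (or shrink) relative to how much they are allowed to:

\[
b \approx 100\,r^{3}, \qquad r = \frac{\text{stretch needed}}{\text{stretch available}}
\]

A line whose spaces use half of their allowed stretch ($r = 1/2$) scores only about 12, while one that uses all of it ($r = 1$) scores 100, so loose lines are penalized steeply. TeX then folds badness and any penalties (for hyphenation, for example) into “demerits” for each line and chooses the set of breakpoints for the whole paragraph with the smallest total, instead of committing to each line in turn.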

Following 3.0, Knuth wanted point release updates to follow the progression of π (the current version is 3.14159265). Knuth also declared that on his death, the version number should be permanently set to π. “From that moment on,” he ordained, “all ‘bugs’ will be permanent ‘features.’”
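The numbering rule can be written as a small formula. Assuming each release after 3.0 appends exactly one more digit of π, as the sequence 3.1, 3.14, 3.141, … suggests, the $k$-th release is π truncated to $k$ decimal places:

\[
v_k = \frac{\lfloor 10^{k}\pi \rfloor}{10^{k}}, \qquad v_1 = 3.1,\; v_2 = 3.14,\; \dots,\; v_8 = 3.14159265, \qquad \lim_{k\to\infty} v_k = \pi
\]

Each release moves closer to π without ever reaching it, which is why fixing the version at π itself works as a permanent end point.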

Refining content creation

In The TeXbook, Knuth beautifully captures the evolutionary feedback loop between humans and technological tools of expression:

When you first try to use TeX, you’ll find that some parts of it are very easy, while other things will take some getting used to. A day or so later, after you have successfully typeset a few pages, you’ll be a different person; the concepts that used to bother you will now seem natural, and you’ll be able to picture the final result in your mind before it comes out of the machine. But you’ll probably run into challenges of a different kind. After another week your perspective will change again, and you’ll grow in yet another way; and so on. As years go by, you might become involved with many different kinds of typesetting; and you’ll find that your usage of TeX will keep changing as your experience builds. That’s the way it is with any powerful tool: There’s always more to learn, and there are always better ways to do what you’ve done before.

Even though TeX itself was frozen at version 3, that didn’t stop smart people from finding better ways to use it. TeX 3 was extremely good at typesetting, but its users still had to traverse a non-trivial learning curve to get the most out of its abilities, especially for complex documents and books. In 1985, Leslie Lamport created LaTeX (“lah-tek” or “lay-tek”) to further streamline the input phase of the TeX process. LaTeX became extremely popular in academia in the 1990s, and the current version, LaTeX2e (first released in 1994), is still the “side” of TeX that most TeX users see today.

LaTeX is essentially a collection of TeX macros that make creating the content of a TeX document more efficient and make the necessary commands more concise. In doing this, LaTeX brings TeX even closer to the ideal of human-readable source content, allowing the writer to focus on the critically important task of content creation before worrying about the appearance of the output.

LaTeX also made certain math input easier to read by adding new commands like \frac, which makes it easier to tell the numerator from the denominator in the source. So with LaTeX, we would rewrite the previous equation in this form:

$y = \sqrt{x} + \frac{x - 1}{2}$

LaTeX also added many macros that make it easier to compose very large documents and books. For example, LaTeX has built-in \chapter, \section, \subsection, and even \subsubsection commands with predefined (but highly customizable) formatting. Commands like these allow the typical LaTeX user to avoid working directly with the so-called “primitives” in TeX. Essentially, the user instructs LaTeX, and LaTeX instructs TeX.
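A minimal sketch of what this looks like in practice, assuming the standard book class (the article class has no \chapter command). The chapter and section titles are placeholders, and the equation is the one from above, written with \frac:

```latex
% A minimal LaTeX book skeleton; all titles are placeholders.
\documentclass{book}   % book (or report) provides \chapter

\begin{document}

\chapter{From boiling lead}        % LaTeX numbers and formats these headings
\section{Hot metal type}
\subsection{Monotype}
\subsubsection{The 4-line system} % unnumbered by default in the book class

The equation from above, set inline:
$y = \sqrt{x} + \frac{x - 1}{2}$.

\end{document}
```

None of these commands mention fonts, sizes, or vertical spacing; the class file supplies those decisions, and the writer only marks up the structure.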

LaTeX’s greatest power of all, however, is its extensibility through the packages developed by its active “super user” base. There are thousands of LaTeX packages in existence today and most of them come pre-installed with modern TeX distributions like TeX Live. There are multiple LaTeX packages to enable and extend every conceivable aspect of document and book design—from math extensions that accommodate every math syntax under the sun (even actuarial) to special document styles to powerful vector graphics packages like PGF/TikZ. There is even a special document class called Beamer that will generate presentation slides from LaTeX, complete with transitions.
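As a small, illustrative example of how packages layer on top of LaTeX, the document below loads amsmath for its align environment and TikZ for a simple plot of $y = \sqrt{x}$. Both packages ship with TeX Live; the drawing itself is only a sketch, not a recommended style:

```latex
\documentclass{article}
\usepackage{amsmath} % extended math environments such as align
\usepackage{tikz}    % TikZ, the high-level front end to PGF

\begin{document}

\begin{align}
  y &= \sqrt{x} + \frac{x - 1}{2}
\end{align}

\begin{tikzpicture}
  % axes with labels
  \draw[->] (0,0) -- (3.2,0) node[right] {$x$};
  \draw[->] (0,0) -- (0,2)   node[above] {$y$};
  % plot y = sqrt(x) for x from 0 to 3
  \draw[domain=0:3, smooth, variable=\x] plot ({\x}, {sqrt(\x)});
\end{tikzpicture}

\end{document}
```

Each \usepackage line adds new commands and environments without changing TeX itself, which is how the ecosystem keeps growing on top of a frozen engine.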

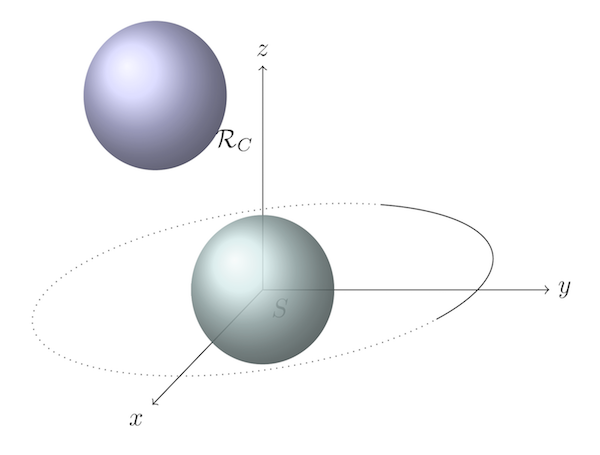

A 3D vector image created with PGF/TikZ

Collectively, these packages, along with the stable underlying code base of TeX, make LaTeX an unrivaled document preparation and publishing system. Despite the popularity of WYSIWYG word processors like Microsoft Word since the 1990s, they can’t come close to the power of LaTeX or the elegance of its output.

It’s worth noting that LaTeX isn’t the only macro layer available for TeX. ConTeXt and others have their own unique syntax to achieve the same goals.

Beyond printed paper

As sophisticated as TeX was, it filled the same role that typecasting and typesetting machines had since Gutenberg’s time: TeX’s job was to tell a printer how to arrange ink on paper. Beginning with TeX82, this was accomplished with a special file format Knuth created called DVI (device independent file format). While the TeX file was human-readable, DVI was meant for machines: a compact binary description of which characters from which fonts belonged at which positions on each page. A separate driver program then translated those instructions into the dots a particular printer could produce.

Even though computers began radically changing the print industry starting in the 1970s, paper would remain the dominant medium on which people read print through the end of the 20th century. But things began changing irreversibly in the 1990s. Computer screens were getting better and more numerous. The Internet also made it easier than ever to share information among computers. It was only natural that people began not just “computing” on computer screens, but also reading more and more on computer screens.

In 1993, Adobe unveiled a new file format, the Portable Document Format (PDF), in an attempt to make cross-platform digital reading easier. PDF was essentially a simplified descendant of PostScript, Adobe’s popular page description language for printers, but unlike PostScript, PDF was designed to be easier to read on a screen.

PDF would spend most of the 1990s relatively unknown to most people. It was a proprietary format that not only required a several-thousand-dollar investment in Adobe Acrobat software to create but also required a $50 Adobe Acrobat Reader program to view. Adobe later made Acrobat Reader available for free, but the proprietary nature of PDF and the relatively limited Internet connectivity of the early 1990s didn’t exactly provide an environment for PDF to flourish.

By the late 1990s, however, PDF had gotten the attention of Hàn Thế Thành, a graduate student who wanted to use TeX to publish his master’s thesis and Ph.D. dissertation directly to PDF. Thành applied his interest in micro-typography to create pdfTeX, a version of TeX capable of typesetting TeX files directly to PDF without creating a DVI file at all.

pdfTeX preserved all of the typographical excellence in TeX and also added a number of micro-typographical features that can be accessed through the LaTeX microtype package. Micro-typography deals with the finer aspects of typography, including Gutenberg-inspired ways of optimizing the justification of lines, like using imperceptibly wider or narrower versions of the same glyph (font expansion) and letting punctuation hang slightly into the margin (character protrusion).
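Here is a minimal sketch of enabling these features, assuming the document is compiled with pdflatex. The option names protrusion and expansion come from the microtype package. Under pdfTeX both are normally switched on by default, so naming them explicitly here is only for readability. Font expansion also needs scalable outline fonts, which is why the sketch loads Latin Modern.

```latex
\documentclass{article}
\usepackage[T1]{fontenc}  % T1 font encoding
\usepackage{lmodern}      % Latin Modern: scalable outline fonts derived from Computer Modern
% protrusion: lets punctuation and some letters hang slightly into the margin
% expansion:  lets pdfTeX stretch or shrink glyphs by tiny amounts to even out word spacing
\usepackage[protrusion=true,expansion=true]{microtype}

\begin{document}
Placeholder text: any justified paragraph will do here.
\end{document}
```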

pdfTeX also harnessed the digital features of PDF, like hyperlinking and table of contents structures. As the general popularity of PDF continued to grow into the 2000s, and once Adobe released the PDF standard to the International Organization for Standardization in 2007, pdfTeX became an essential version of TeX. Today it is included by default in every standard TeX distribution, along with pdfLaTeX, which processes LaTeX files with the pdfTeX engine.
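From LaTeX, these PDF features are usually reached through the hyperref package. This is a minimal sketch that assumes pdflatex is run twice, which is the usual requirement for a table of contents. The URL and section titles are placeholders.

```latex
\documentclass{article}
\usepackage{hyperref} % conventionally loaded near the end of the preamble

\begin{document}
\tableofcontents % with hyperref, each entry becomes a link to its section

\section{Introduction} % sections also appear in the PDF viewer's bookmark panel
See \href{https://example.com}{an external link}, or jump ahead to
Section~\ref{sec:method} (the reference is a clickable link).

\section{Method}\label{sec:method}
Text goes here.
\end{document}
```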

It’s worth recognizing that Donald Knuth did not create TeX to speed up the publishing process. He wanted to emulate the appearance of Monotype using mathematics. But with the evolution of LaTeX, pdfTeX, and the Internet, TeX ended up enabling what probably seemed unimaginable to anyone waiting weeks for their galley proofs to come in the mail before the 1970s. Today, thanks to TeX and modern connectivity, we can publish extremely sophisticated documents for a nearly unlimited audience in a matter of seconds.

The next innovation in typography: slowing down

I think a lot of people have this idea that pure mathematics is the polar opposite of art. A left brain versus right brain thing, if you will. I actually think that math’s role in the human experience requires artistry as much as logical thinking: logic to arrive at the mathematical truths of our universe and artistry to communicate those truths back across the universe.

As George Johnson writes in Fire in the Mind:

… numbers, equations, and physical laws are neither ethereal objects in a platonic phantom zone nor cultural inventions like chess, but simply patterns of information—compressions—generated by an observer coming into contact with the world… The laws of physics are compressions made by information gatherers. They are stored in the forms of markings—in books, on magnetic tapes, in the brain. They are part of the physical world.

Our ability to mark the universe has greatly expanded since prehistoric people first disturbed the physical world with their thoughts on cave walls. For most of recorded history, writing meant having to translate thoughts through lead, ink, and paper. Untold numbers of highly skilled people were involved in the artistry of pre-digital typesetting. Even though their skills were made obsolete by technological evolution, we can be thankful that people like Donald Knuth fossilized typographical artistry in the timelessness of mathematics.

And so here we are now, in a time when written language needs only subatomic ingredients like electricity and light to be conveyed to other human beings. Our ability to “publish” our thoughts is nearly instantaneous, and our audience has become global, if not universal, as we spill quantum debris out into the cosmos.

Today, faster publishing is no longer an interesting problem. It’s an equation that’s been solved—it can’t be reduced further.

As with so many other aspects of modern life, technology has landed us in an evolutionarily inverted habitat. To be physiologically healthy, for example, we have to override our instincts to eat more and rest. When it comes to publishing, we now face the challenge of imposing more constraint on the publishing process for the sake of leaner output and the longevity of our thoughts.

For me, this is where understanding the history of printing and typography has become a kind of cognitive asset. These realizations have made me resist automation a bit more and actually welcome friction in the creative processes necessary even for technical writing. It’s also helped me justify spending more time, not less, in the artistic construction of mathematical formulas and the presentation of quantitative information in general.

Technological innovation, in the conventional sense, won’t help us slow the publishing process back down. Slowing down requires better thought technology. It requires a willingness to draft for the sake of drafting. It requires throwing away most of what we think because most of our thoughts don’t deserve to be read by others. Most of our thoughts are distractions—emotional sleights of the mind that trick us into thinking we care about something that we really don’t—or that we understand something that we really don’t.

Rather than trying to compress our workflows further, we need to factor the art of written expression back into thinking, writing, and publishing. Publishing is the hardest of the three to reach, and it is worthy only of the purest thoughts and conclusions.

-

TeX is pronounced “tek” and is an English representation of the Greek letters τεχ, which is an abbreviation of τέχνη (or technē). Techne is a Greek concept that can mean either “art” or “craft,” but usually in the context of a practical application. ↩

-

One noteworthy TeX predecessor was eqn, a syntax that was designed to format equations for printing in troff, a system developed at AT&T’s Bell Labs for Unix in the 1970s. The eqn syntax for mathematics notation has similarities with TeX, leading some to speculate that eqn influenced Knuth in his development of TeX. We do know that Knuth was aware enough of troff to have an opinion of it, and not a good one. See p. 349 of TUGboat, Vol. 17 (1996), No. 4 for more. Thanks to Duncan Agnew for bringing troff to my attention and also pointing out that it was later replaced by groff, which writes PostScript. groff is included in modern Unix-based systems (even macOS) and can be found via the man pages. Remarkably, it can still take troff-based syntax developed in the 1970s and typeset it without any alterations. ↩

-

Knuth’s philosophy that computer code should be as human-readable and as self-documenting as possible also led him to develop literate programming, a pivotal contribution to computer programming that has impacted every mainstream programming language in use today. ↩